One of the most important things kids are taught these days in school is that words and emotions matter. My son was being taught as early as kindergarten about healthy ways to express how he felt and why his choice of words mattered. It appears similar training may be needed for John Matze, the CEO of Parler.

It’s important to note that I’m not an attorney. The extent of my constitutional knowledge was an undergrad-level constitutional law course taken when I almost majored in political science. However, I did major in philosophy, and it doesn’t take an expert in law or philosophy to understand that the responsibilities and expectations around social media changed dramatically last Wednesday during the assault on the Capitol.

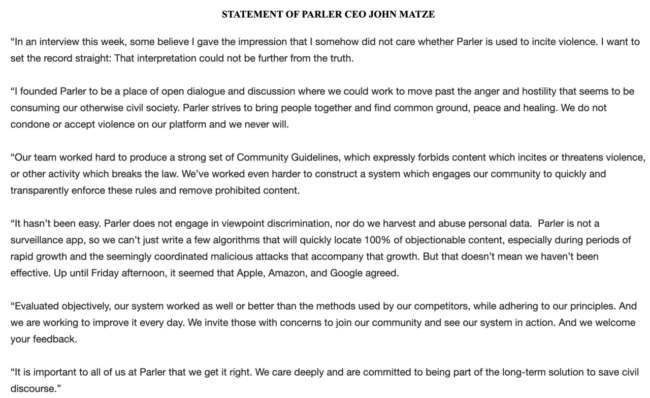

Here is the statement emailed to us by John Matze, the CEO of Parler. I’ve added my thoughts under each bolded line quoted from his statement.

**In an interview this week, some believe I gave the impression that I somehow did not care whether Parler is used to incite violence. I want to set the record straight: That interpretation could not be further from the truth.**

That’s nice. It’s important when making publicly traceable comments to ensure you don’t actually advocate violence.

**I founded Parler to be a place of open dialogue and discussion where we could work to move past the anger and hostility that seems to be consuming our otherwise civil society. Parler strives to bring people together and find common ground, peace and healing. We do not condone or accept violence on our platform and we never will.**

Great! So you’ve obviously banned all the groups who planned the assault on the Capitol, right?

**Our team worked hard to produce a strong set of Community Guidelines, which expressly forbids content which incites or threatens violence, or other activity which breaks the law. We’ve worked even harder to construct a system which engages our community to quickly and transparently enforce these rules and remove prohibited content.**

Okay, so … that’s great. Except it appears the mass purging and banning was of people who DID NOT agree with the rhetoric being shared on Parler. That’s not quite what people were mad about.

**It hasn’t been easy. Parler does not engage in viewpoint discrimination, nor do we harvest and abuse personal data. Parler is not a surveillance app, so we can’t just write a few algorithms that will quickly locate 100% of objectionable content, especially during periods of rapid growth and the seemingly coordinated malicious attacks that accompany that growth. But that doesn’t mean we haven’t been effective. Up until Friday afternoon, it seemed that Apple, Amazon, and Google agreed.**

A few things to consider:

First of all, yes, up until Wednesday, things were different. But once it became clear that people had shifted from talk to action, you have to shift your approach, because clearly your users had a hard time differentiating discussion from action. And what “malicious attacks” is he talking about? Is he implying that bad actors encouraged Parler users? If so, moderation might have caught those bad actors too, and the users who acted on what they read still bear responsibility as well.

Second, no one’s asking them to bang out a few algorithms. Plenty of places on the internet use this amazing thing called human moderation, where actual people read the messages and determine if they’re appropriate. In fact, Facebook employs a whole team of them. Many websites set their own rules for what is appropriate and appoint someone, often called a “moderator,” to enforce those rules. I love to post on a site dedicated to snarking about television shows, and there are hard rules about not discussing politics. An actual person will go in and delete your post if you break the rules, rules that are agreed to when you sign up for the site. It’s not a complex concept, and it’s one that, amazingly enough, requires very little in the way of fancy “algorithms,” just common sense and a list of specific rules.

Amazing how that works, right? It’s sort of like when you’re a kid, and the older elementary school kids get little badges and get to be “safety patrol,” teaching the younger ones not to run into traffic or climb up walls. See, that’s another reason why a repeat stint in kindergarten might be helpful!

While Parler might not have wanted to pay for moderation and algorithms, they probably should have considered paying for better security, since most of their data has been harvested and scraped since their shutdown. If you were on Parler, either as an actual user or even just as someone who was lurking and monitoring the things that were being posted, you might want to change your passwords and lock down your online identity because clearly, Parler didn’t do a great job of locking up that data.

**Evaluated objectively, our system worked as well or better than the methods used by our competitors, while adhering to our principles. And we are working to improve it every day. We invite those with concerns to join our community and see our system in action. And we welcome your feedback.**

That’s a nonsensical statement. There’s no way to measure how your methods work versus others because you’re not that old a social network. You only had a few hundred thousand users until the middle of 2020, so it’s impossible to know if your methodology scales appropriately. I also doubt that average users who join Parler would get a full report on how your moderation methods work versus those at Facebook and Twitter. Also, you’re not stating who your competitors are. If your competition is Facebook, no, you’re probably not doing better. If your competitor is 8kun (formerly 8chan), sure, but that’s like saying you’re a model citizen because you’re in a minimum-security prison instead of max.

**It is important to all of us at Parler that we get it right. We care deeply and are committed to being part of the long-term solution to save civil discourse.**

Fantastic. Now define civil discourse. Do you mean a discussion about whether federal or state governments have more obligation to provide infrastructure services, or whether we should take a hawkish or dovish approach to fiscal stimulus? Because that sounds like good, healthy, liberal vs. conservative civil discourse. Or do you mean we have to be civil to people who are openly anti-Semitic, racist, and homophobic? Because no, that’s not civil discourse. And thanks to the internet and the folks at Reddit and the ADL, there are plenty of screenshots proving full well that Parler was, in fact, a cesspool of hatred and not a polite place of discourse. And well, here we are.

WASHINGTON DC, DISTRICT OF COLUMBIA, UNITED STATES – 2021/01/06: Protesters seen all over Capitol building where pro-Trump supporters riot and breached the Capitol. Rioters broke windows and breached the Capitol building in an attempt to overthrow the results of the 2020 election. Police used batons and tear gas grenades to eventually disperse the crowd. Rioters used metal bars and tear gas as well against the police. (Photo by Lev Radin/Pacific Press/LightRocket via Getty Images)

It comes down to this: Parler is a living example of why Karl Popper’s paradox of tolerance is so important right now. The paradox holds that any just society must be intolerant of intolerance because otherwise, intolerance can run rampant over all other opinions. A just society needs to draw a line in the sand and state a baseline that cannot be crossed. And it’s not a baseline that’s created through vague terms like “we support civil discourse”; it’s created by saying “any violent talk is banned.”

What’s especially mind-boggling is that Apple, Google, and Amazon told Parler to implement moderation, and they refused. Even a few steps forward could have allowed them to continue. But maybe this is better, because a company that so completely misunderstands how free speech works, and fails to grasp that free speech does not mean words are meaningless and therefore beyond regulation, doesn’t necessarily deserve to exist.
